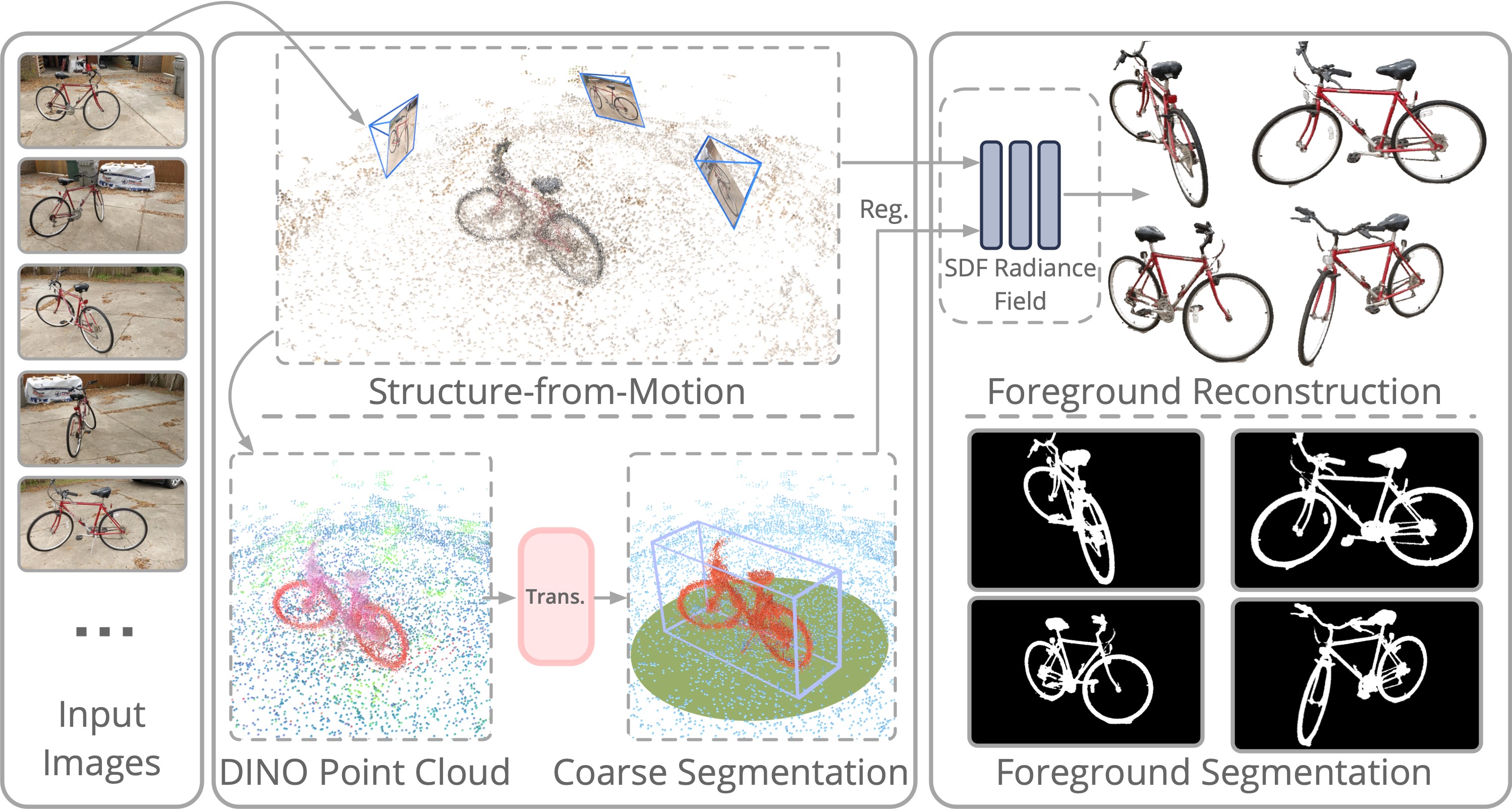

Method Overview

Given an object-centric video, we first obtain a coarse decomposition by segmenting the salient foreground object from a semi-dense SfM point cloud, using 2D DINO features aggregated onto each point. We then train a decomposed neural scene representation from the multi-view images, guided by the coarse decomposition results, to reconstruct foreground objects and render high-quality, multi-view-consistent foreground masks.
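To make the pointwise feature aggregation concrete, the following is a minimal NumPy sketch of one plausible way to lift per-view 2D features onto SfM points: each point is projected into every view and the features sampled at the projected pixels are averaged. It is an illustration under stated assumptions, not the pipeline used in this work. The function name `aggregate_point_features`, the pinhole camera model, nearest-pixel sampling, and plain averaging are all assumptions, and occlusion is ignored (in practice the SfM track of each point could supply its visibility).

```python
import numpy as np


def aggregate_point_features(points, feature_maps, intrinsics, world_to_cam):
    """Average per-view 2D features over the views each 3D point projects into.

    Hypothetical helper for illustration only.
    points:        (N, 3) world-space SfM points
    feature_maps:  (V, H, W, C) per-view feature maps (e.g. DINO features)
    intrinsics:    (V, 3, 3) pinhole intrinsics at feature-map resolution
    world_to_cam:  (V, 4, 4) world-to-camera extrinsics
    Returns (N, C) mean features and (N,) counts of contributing views.
    """
    n_views, height, width, channels = feature_maps.shape
    n_points = points.shape[0]
    feat_sum = np.zeros((n_points, channels), dtype=np.float64)
    counts = np.zeros(n_points, dtype=np.int64)
    # Homogeneous coordinates so the 4x4 extrinsics apply directly.
    points_h = np.concatenate([points, np.ones((n_points, 1))], axis=1)

    for v in range(n_views):
        cam = (world_to_cam[v] @ points_h.T).T[:, :3]
        depth = cam[:, 2]
        in_front = depth > 1e-6
        # Avoid dividing by non-positive depth; such points are masked out below.
        safe_depth = np.where(in_front, depth, 1.0)
        pix = (intrinsics[v] @ cam.T).T
        col = np.round(pix[:, 0] / safe_depth).astype(np.int64)
        row = np.round(pix[:, 1] / safe_depth).astype(np.int64)
        valid = (
            in_front
            & (col >= 0) & (col < width)
            & (row >= 0) & (row < height)
        )
        # Nearest-pixel lookup; occlusion is not handled in this sketch.
        feat_sum[valid] += feature_maps[v, row[valid], col[valid]]
        counts[valid] += 1

    seen = counts > 0
    feat_sum[seen] /= counts[seen, None]
    return feat_sum, counts
```

The returned per-point features could then be passed to any point cloud segmentation step that separates the salient foreground from the background. Points that no view observes keep a zero feature and a count of zero, so they can be filtered out beforehand.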