@misc{xia2026habitatgs,

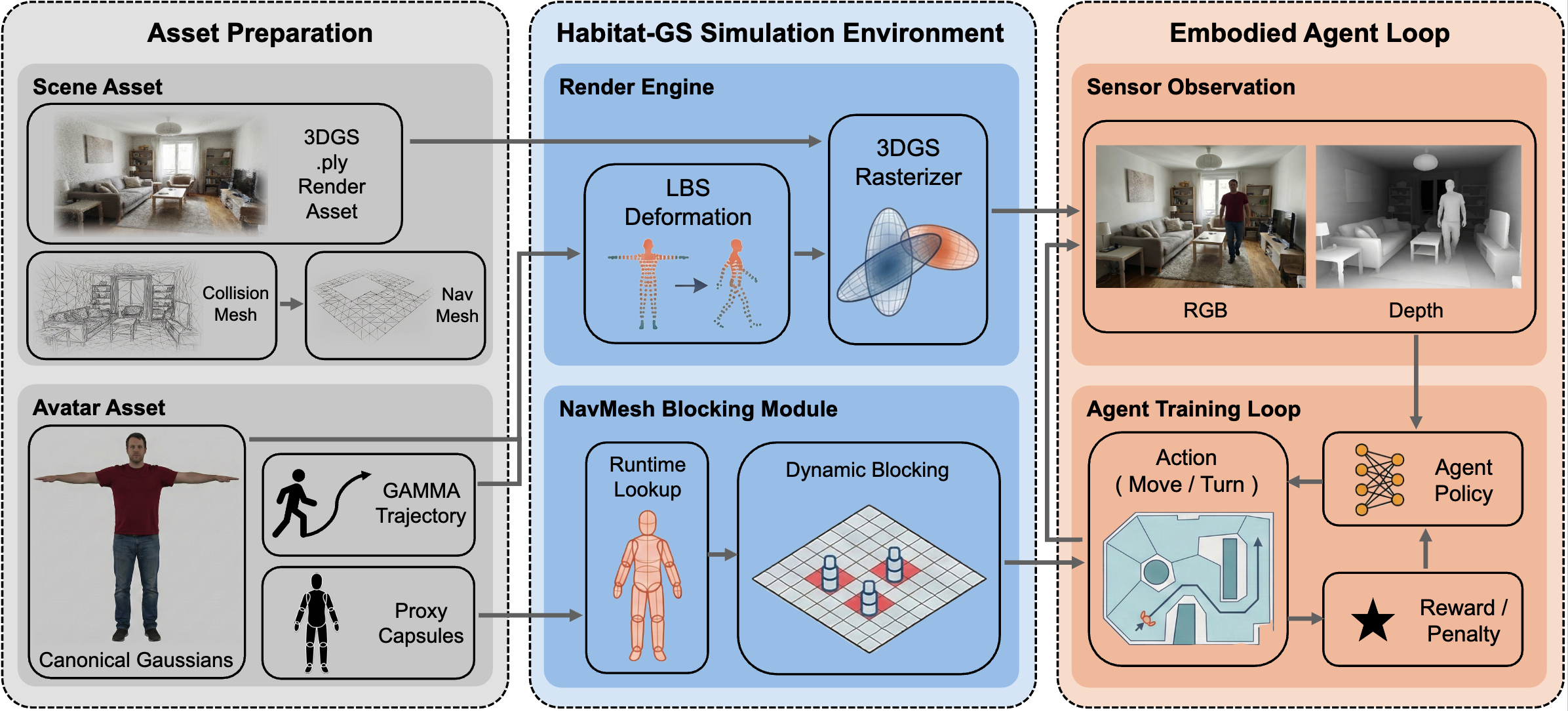

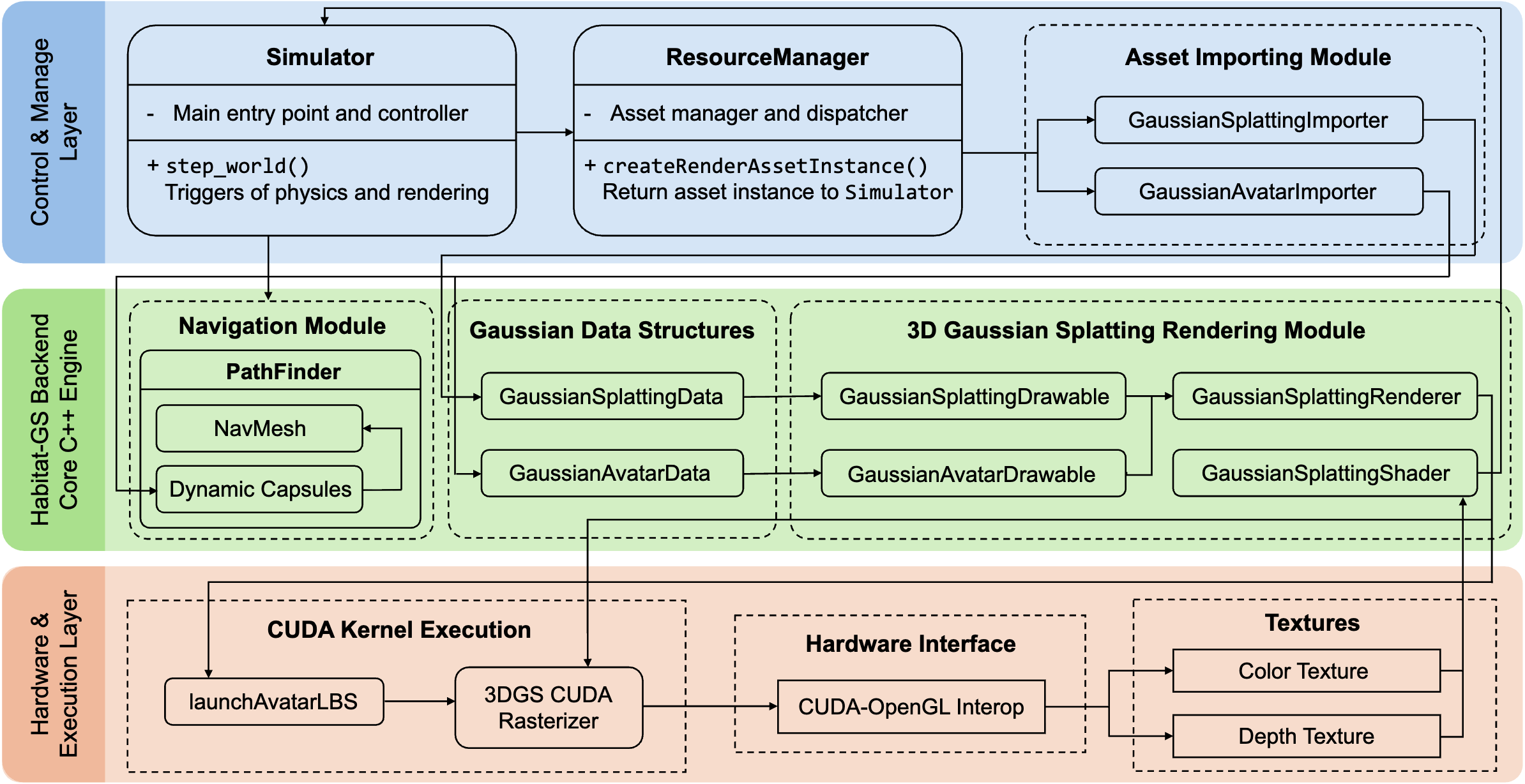

title={Habitat-GS: A High-Fidelity Navigation Simulator with Dynamic Gaussian Splatting},

author={Ziyuan Xia and Jingyi Xu and Chong Cui and Yuanhong Yu and Jiazhao Zhang and Qingsong Yan and Tao Ni and Junbo Chen and Xiaowei Zhou and Hujun Bao and Ruizhen Hu and Sida Peng},

year={2026},

eprint={2604.12626},

archivePrefix={arXiv},

primaryClass={cs.RO},

url={https://arxiv.org/abs/2604.12626},

}